For Political Cartoonists, the Irony Was That Facebook Didn’t Recognize Irony

As Facebook has become more active at moderating political speech, it has had trouble dealing with satire.

Since 2013, Matt Bors has made a living as a left-leaning cartoonist on the internet. His site, The Nib, runs cartoons from him and other contributors that regularly skewer right-wing movements and conservatives with political commentary steeped in irony.

One cartoon in December took aim at the Proud Boys, a far-right extremist group. With tongue planted firmly in cheek, Mr. Bors titled it “Boys Will Be Boys” and depicted a recruitment session where new Proud Boys were trained to be “stabby guys” and to “yell slurs at teenagers” while playing video games.

Days later, Facebook sent Mr. Bors a message saying that it had removed “Boys Will Be Boys” from his Facebook page for “advocating violence” and that he was on probation for violating its content policies.

It wasn’t the first time that Facebook had dinged him. Last year, the company briefly took down another Nib cartoon — an ironic critique of former President Donald J. Trump’s pandemic response, the substance of which supported wearing masks in public — for “spreading misinformation” about the coronavirus. Instagram, which Facebook owns, removed one of his sardonic antiviolence cartoons in 2019 because, the photo-sharing app said, it promoted violence.

What Mr. Bors encountered was the result of two opposing forces unfolding at Facebook. In recent years, the company has become more proactive at restricting certain kinds of political speech, clamping down on posts about fringe extremist groups and on calls for violence. In January, Facebook barred Mr. Trump from posting on its site altogether after he incited a crowd that stormed the U.S. Capitol.

At the same time, misinformation researchers said, Facebook has had trouble identifying the slipperiest and subtlest of political content: satire. While satire and irony are common in everyday speech, the company’s artificial intelligence systems — and even its human moderators — can have difficulty distinguishing them. That’s because such discourse relies on nuance, implication, exaggeration and parody to make a point.

That means Facebook has sometimes misunderstood the intent of political cartoons, leading to takedowns. The company has acknowledged that some of the cartoons it expunged, including those from Mr. Bors, were removed by mistake, and it later reinstated them.

“If social media companies are going to take on the responsibility of finally regulating incitement, conspiracies and hate speech, then they are going to have to develop some literacy around satire,” Mr. Bors, 37, said in an interview.

Emerson T. Brooking, a resident fellow for the Atlantic Council who studies digital platforms, said Facebook “does not have a good answer for satire because a good answer doesn’t exist.” Satire shows the limits of a content moderation policy and may mean that a social media company needs to become more hands-on to identify that type of speech, he added.

Many of the political cartoonists whose commentary was taken down by Facebook were left-leaning, in a sign of how the social network has sometimes clipped liberal voices. Conservatives have previously accused Facebook and other internet platforms of suppressing only right-wing views.

In a statement, Facebook did not address whether it has trouble spotting satire. Instead, the company said it made room for satirical content — but only up to a point. Posts about hate groups and extremist content, it said, are allowed only if the posts clearly condemn or neutrally discuss them, because the risk for real-world harm is otherwise too great.

Facebook’s struggles to moderate content across its core social network, Instagram, Messenger and WhatsApp have been well documented. After Russians manipulated the platform before the 2016 presidential election by spreading inflammatory posts, the company recruited thousands of third-party moderators to prevent a recurrence. It also developed sophisticated algorithms to sift through content.

Facebook also created a process so that only verified buyers could purchase political ads, and instituted policies against hate speech to limit posts that contained anti-Semitic or white supremacist content.

Last year, Facebook said it had stopped more than 2.2 million political ad submissions that had not yet been verified and that targeted U.S. users. It also cracked down on the conspiracy group QAnon and the Proud Boys, removed vaccine misinformation, and displayed warnings on more than 150 million pieces of content viewed in the United States that third-party fact checkers debunked.

But satire kept popping up as a blind spot. In 2019 and 2020, Facebook often dealt with far-right misinformation sites that used “satire” claims to protect their presence on the platform, Mr. Brooking said. For example, The Babylon Bee, a right-leaning site, sometimes trafficked in misinformation under the guise of satire.

“At a point, I suspect Facebook got tired of this dance and adopted a more aggressive posture,” Mr. Brooking said.

Political cartoons that appeared in non-English-speaking countries and contained sociopolitical humor and irony specific to certain regions also were tricky for Facebook to handle, misinformation researchers said.

That has caused fallout among many political cartoonists. One is Ed Hall in northern Florida, whose independent work regularly appears in North American and European newspapers.

When Prime Minister Benjamin Netanyahu said in 2019 that he would bar two congresswomen — critics of Israel’s treatment of Palestinians — from visiting the country, Mr. Hall drew a cartoon showing a sign affixed to barbed wire that read, in German, “Jews are not welcome here.” He added a line of text addressing Mr. Netanyahu: “Hey Bibi, did you forget something?”

Mr. Hall said his intent was to draw an analogy between how Mr. Netanyahu was treating the U.S. representatives and Nazi Germany. Facebook took the cartoon down shortly after it was posted, saying it violated its standards on hate speech.

“If algorithms are making these decisions based solely upon words that pop up on a feed, then that is not a catalyst for fair or measured decisions when it comes to free speech,” Mr. Hall said.

Adam Zyglis, a nationally syndicated political cartoonist for The Buffalo News, was also caught in Facebook’s cross hairs.

After the storming of the Capitol in January, Mr. Zyglis drew a cartoon of Mr. Trump’s face on a sow’s body, with a number of Mr. Trump’s “supporters” shown as piglets wearing MAGA hats and carrying Confederate flags. The cartoon was a condemnation of how Mr. Trump had fed his supporters violent speech and hateful messaging, Mr. Zyglis said.

Facebook removed the cartoon for promoting violence. Mr. Zyglis guessed that was because one of the flags in the comic included the phrase “Hang Mike Pence,” which Mr. Trump’s supporters had chanted about the vice president during the riot. Another supporter piglet carried a noose, an item that was also present at the event.

“Those of us speaking truth to power are being caught in the net intended to capture hate speech,” Mr. Zyglis said.

For Mr. Bors, who lives in Ontario, the issue with Facebook is existential. While his main source of income is paid memberships to The Nib and book sales on his personal site, he gets most of his traffic and new readership through Facebook and Instagram.

The takedowns, which have resulted in “strikes” against his Facebook page, could upend that. If he accumulates more strikes, his page could be erased, something that Mr. Bors said would cut 60 percent of his readership.

“Removing someone from social media can end their career these days, so you need a process that distinguishes incitement of violence from a satire of these very groups doing the incitement,” he said.

Mr. Bors said he had also heard from the Proud Boys. A group of them recently organized on the messaging app Telegram to mass-report his critical cartoons to Facebook for violating the site’s community standards, he said.

“You just wake up and find you’re in danger of being shut down because white nationalists were triggered by your comic,” he said.

Facebook has sometimes recognized its errors and corrected them after he has made appeals, Mr. Bors said. But the back-and-forth and the potential for expulsion from the site have been frustrating and made him question his work, he said.

“Sometimes I do think about if a joke is worth it, or if it’s going to get us banned,” he said. “The problem with that is, where is the line on that kind of thinking? How will it affect my work in the long run?”

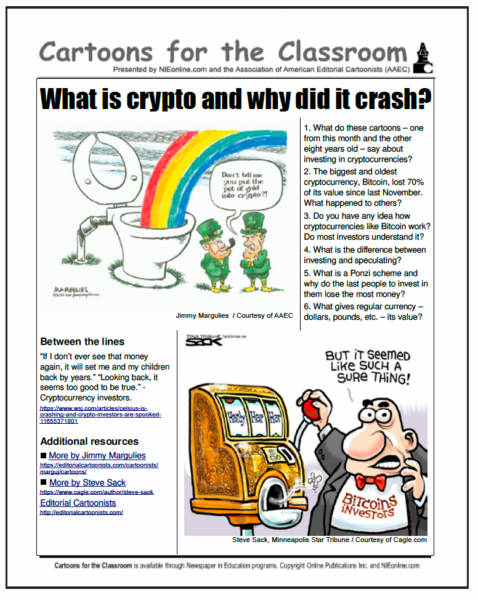

A weekly installment of the comic strip “Tom the Dancing Bug” by cartoonist Ruben Bolling, which is syndicated by Andrews McMeel Syndication and ran on The Nib, was taken down by Facebook. Used here with permission. (This cartoon ran in the newspaper in question after being grabbed and used without permission, attribution or compensation, which was unprofessional at best, theft at worst.)